
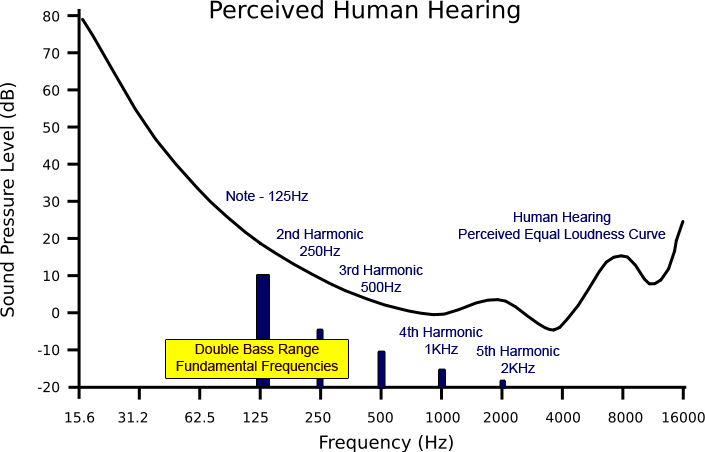

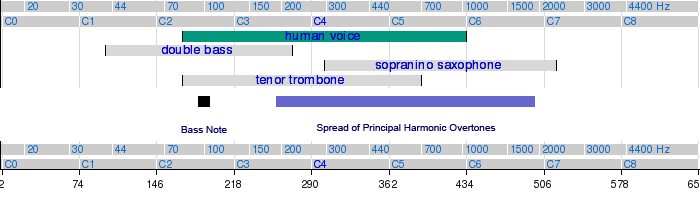

I was very intrigued by an earlier post on this forum which suggested that bass lines would be particularly sensitive to the effects of compression by the psycho-acoustic algorithms used in MP3 encoding. Presumably this is because low-level, low-frequency information is easily ‘masked’ by louder, higher-frequency information in the material and is therefore removed in the compression. I thought it might be interesting to take a look at that idea.

Simply put, the diagram above shows, against the backdrop of an equal-loudness plot of the human ear’s sensitivity, a louder sound ‘masking’ another sound. In these circumstances the louder sound obscures the quieter one, so when this sequence is compressed the quieter material is not encoded and is ‘lost’ from the final compressed material. Hence the term ‘lossy compression’, of which MP3 is one variant.

Coming to the specific case of bass instruments, I was keen to establish how their sound in particular might be impoverished by such compression. The first things to consider are the frequencies involved and how the harmonics of bass notes might be affected by the MP3 compression algorithms. In the diagram above you can see the fundamental of a note in the middle of a bass’s musical range, with its quieter harmonics superimposed on an equal-loudness curve.

It’s important to remind ourselves here that an instrument’s ‘recognisability’ is a function of its musical range, its dynamics and, in particular, its timbre. Timbre is what allows us to distinguish two instruments playing a note at the same pitch and loudness, and it is due to the relative strength of the harmonic overtones each instrument produces. All other things being equal, different harmonic overtone content results in different characteristic timbres.
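To make the point about timbre concrete, here is a minimal sketch that builds two tones on the same fundamental but with different harmonic strengths. The 100 Hz note, the 8 kHz sample rate and both sets of harmonic weights are arbitrary values I have assumed for illustration; they are not measurements of any real instrument, and no attempt is made to match the loudness of the two tones.

```python
import math

SAMPLE_RATE = 8000  # Hz, assumed for illustration
F0 = 100.0          # Hz, hypothetical note; one period is exactly 80 samples


def synthesize(f0, harmonic_weights, sample_rate=SAMPLE_RATE, n_samples=160):
    """Sum sine harmonics of f0; harmonic_weights[k] scales harmonic k + 1."""
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        value = sum(
            weight * math.sin(2 * math.pi * (k + 1) * f0 * t)
            for k, weight in enumerate(harmonic_weights)
        )
        samples.append(value)
    return samples


# Same fundamental, different overtone strengths (made-up weights).
mellow = synthesize(F0, [1.0, 0.2, 0.05])
bright = synthesize(F0, [1.0, 0.7, 0.5, 0.4, 0.3])

period = int(SAMPLE_RATE / F0)

# Both waveforms repeat every 80 samples, i.e. they have the same pitch ...
same_pitch = all(
    abs(mellow[i] - mellow[i + period]) < 1e-9
    and abs(bright[i] - bright[i + period]) < 1e-9
    for i in range(period)
)

# ... but the waveform shapes differ, which is what we hear as timbre.
max_difference = max(abs(a - b) for a, b in zip(mellow, bright))

print("same pitch:", same_pitch)
print("largest sample difference:", round(max_difference, 3))
```

If the overtones of the ‘bright’ tone were stripped away, its waveform would move towards that of the ‘mellow’ tone, even though the pitch would not change.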
The upshot is that if you significantly alter the representation of a note’s harmonic content, you are very unlikely to represent the full timbre of the note faithfully, and therefore the timbre of the instrument producing it. Of course, the fundamental dominates in loudness terms, so its frequency will still give the game away in the case of a bass note, but the full character of the note may well not be rendered accurately if much of its harmonic content is ‘masked’ out. In a full mix of instruments playing simultaneously, the bass note’s distinguishing harmonic overtones fall in the mid-frequency range, a musical ‘space’ that is very likely occupied by the louder fundamentals of other instruments’ notes. In this situation it is very likely that the bass note’s harmonic content would be ‘lost’.

It’s interesting to look at a spectrum analysis of a double bass note, both bowed and plucked, to appreciate the harmonic content. In the analysis below you can see that the harmonics of the bowed G note are a very significant part of the note’s overall frequency content, which extends to well over 5 kHz, and that they persist for quite some time after the leading edge of the note has passed. Plucking the note appears to damp the production of harmonic content above 1 kHz significantly, probably because the bow keeps exciting the string for longer, allowing more harmonics to develop.

Looking further into the harmonic content of bass notes, I analysed a track that has a lot of individual bass notes played in isolation and also passages where the same bass is part of a complex mix. The spectrum analysis above shows a single plucked double bass note from the track ‘Bass Suite No1’ from Avishai Cohen’s 1998 ‘Adama’ album.
The left-hand plot is from an MP3 encoded at CBR 128 kbit/s by the LAME 3.98.4 encoder; the right-hand plot is from the 16-bit / 44.1 kHz WAV file. You can see, as before, the MP3’s low-pass filter cutting off at just above 16 kHz. You can also see the relatively strong harmonic content of the single note in both the MP3 and the WAV plot. The WAV plot, on the right, shows harmonic content all the way up to 20 kHz (the top of the spectrum stops at 22 kHz). The amplitude of the note’s fundamental, at the very foot of the plot, has been over-scaled to allow the plot to illustrate the harmonic content. So you can see that the harmonic content is significant. If we put these two traces together, MP3 and WAV, you can more readily see the comparison:

Now let’s look at a sliding single bass note. I have isolated this note in the time domain so we can easily see its spectral analysis in the mix:

Here I have highlighted, in white, the fundamental and the first 15 harmonics of the bass note as it slides up in frequency. You can see there is again significant harmonic content, in this case clearly visible all the way to nearly 2 kHz. It’s slightly easier to see if I extract this note and its harmonic content into its own spectral layer and show the relative strength of the harmonics in 3D, so you can see how each harmonic varies in amplitude over the life of the sliding bass note. You can also see the ‘bump’ of the fundamental at the bottom left of the plot:

Now let’s look at three bass notes plucked in quick succession, which sound like ‘DAAH-dit-Dummm’:

You can see again, in the WAV plot, that there is a strong fundamental in the first note, less in the second, and almost as much in the third as in the first, but there is also very strong harmonic content accompanying each note in the whole phrase.
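For anyone who wants to put numbers on the relative strength of harmonics without a spectrum analyser, the sketch below measures each harmonic of a test tone relative to its fundamental using the Goertzel algorithm. This is not how the plots in this post were produced. The test tone is synthetic: the 100 Hz fundamental, the 8 kHz sample rate and the 1/k roll-off of the 15 harmonics are all assumptions chosen so the result is easy to check, not values taken from the track.

```python
import math


def goertzel_magnitude(samples, sample_rate, freq):
    """Magnitude of the DFT bin nearest to freq, via the Goertzel recurrence."""
    n = len(samples)
    k = round(n * freq / sample_rate)
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s1 = s2 = 0.0
    for x in samples:
        # s1 holds the newest state, s2 the one before it.
        s1, s2 = x + coeff * s1 - s2, s1
    power = s1 * s1 + s2 * s2 - coeff * s1 * s2
    return math.sqrt(max(power, 0.0))


SAMPLE_RATE = 8000  # Hz, assumed
F0 = 100.0          # Hz, hypothetical fundamental
N = 800             # exactly 10 periods, so every harmonic sits on a DFT bin

# Synthetic tone: harmonic k has amplitude 0.5 / k (an arbitrary roll-off).
signal = [
    sum(
        (0.5 / k) * math.sin(2 * math.pi * k * F0 * i / SAMPLE_RATE)
        for k in range(1, 16)
    )
    for i in range(N)
]

fundamental = goertzel_magnitude(signal, SAMPLE_RATE, F0)
relative_db = {
    k: 20.0 * math.log10(
        goertzel_magnitude(signal, SAMPLE_RATE, k * F0) / fundamental
    )
    for k in range(1, 16)
}

for k, level in relative_db.items():
    print(f"harmonic {k:2d} at {k * F0:6.0f} Hz: {level:6.2f} dB re fundamental")
```

With a real recording you would run the same measurement over the WAV and the decoded MP3 and compare the per-harmonic levels, bearing in mind that real notes do not land exactly on DFT bins, so some windowing would be needed.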
Just how strong these harmonics typically are can be seen in the next plot:

The fundamental peaks at -22 dB and even the 10th harmonic is still at -60 dB. Since harmonics generally reduce in loudness as they increase in frequency, this shows that the lower harmonics have a significant influence on the ‘character’, i.e. the timbre, of the overall bass note, so preserving them is essential when the bass notes are being ‘solo-ed’ as in this case.

In the combined plot below you can see that the MP3 version of these three notes, apart from the content above 16 kHz which has been removed by the encoder’s low-pass filter, is pretty much identical to the original in the WAV file:

In the combined plot above, the green trace is the original WAV file content of the three notes and the slightly offset yellowish trace is the compressed MP3 representation of the same notes. So in the case of ‘solo-ed’ bass notes, MP3 compression does a pretty good job.

But what would happen if the bass notes were being played in the middle of a complex musical passage? This Adama track includes contributions played on the double bass, a soprano saxophone and a trombone, together with percussion instruments. Leaving out the percussion, on the basis that its notes are more like impulses and of relatively short duration, we have a musical frequency ‘space’ which looks like this:

From this chart you can see that continuous notes played on the saxophone and the trombone occupy the same frequency ‘space’ in the mix as the principal (loudest) harmonics of a typical, relatively short double bass note, and might therefore very well mask those harmonics so that they would not be heard. In that case you might expect the MP3 compression’s psycho-acoustic algorithms to ‘lose’ the bass harmonics.
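The overlap can be checked with a little arithmetic. The sketch below lists the first ten harmonics of a bass note and reports which instrument ranges each one falls into. The note (G2 at about 98 Hz) and the instrument ranges are my own approximate, textbook-style assumptions for illustration; they are not read off the chart.

```python
# Approximate sounding ranges in Hz. These are rough assumed figures,
# not values taken from the chart in the post.
RANGES_HZ = {
    "soprano saxophone": (208.0, 1319.0),  # roughly Ab3 to E6
    "trombone": (82.0, 698.0),             # roughly E2 to F5
}

BASS_F0 = 98.0  # Hz, G2, assumed example note


def harmonics(f0, count):
    """Fundamental plus overtones: f0, 2*f0, ..., count*f0."""
    return [f0 * k for k in range(1, count + 1)]


def overlapping(freq, ranges=RANGES_HZ):
    """Names of the instruments whose assumed range contains freq."""
    return [name for name, (low, high) in ranges.items() if low <= freq <= high]


for k, freq in enumerate(harmonics(BASS_F0, 10), start=1):
    shared_with = overlapping(freq) or ["nothing in this table"]
    print(f"harmonic {k:2d}: {freq:6.1f} Hz shares space with {', '.join(shared_with)}")
```

Under these assumptions every one of the first ten harmonics sits inside the range of at least one of the two wind instruments, which is consistent with the expectation that sustained wind notes could cover them.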
The next spectral analysis shows a passage where, at the beginning of the time window, there is a bass note played pretty much in clear ‘space’, and at the end of the window you can see another bass note played entirely on its own:

In the red highlighted box, though, you can see a bass note played while both wind instruments are playing a longer, louder phrase. In the red box you can also just about see the true amplitude of the wind instruments’ notes, drawn as a single line above the 3D spectral trace. (The spectral traces of the wind instruments’ fundamentals and lower harmonics are deliberately clipped in this plot so that we can more easily see the overall content, which is why they appear to have flat tops at the lower frequencies.) As before, the green traces are the WAV file notes and the red traces, slightly offset and immediately alongside, are the MP3 representations of the same notes.

In the white box you can see, particularly in the centre of the image, that the bass’s harmonics appear partially occluded by the wind instruments in the WAV trace but are very significantly affected in the MP3 representation. It is difficult to see any of the bass’s harmonics represented at all in the MP3 companion trace in this more complex passage, whereas where the bass notes are on their own in the mix the MP3 representation clearly matches the WAV file (see the note at the end of the time window). It’s perhaps easier to see the WAV / MP3 differences in this centre part of the passage, where you can see multiple differences in the representations:

So, all in all, an interesting experiment, which shows that where instruments are soloing or are the predominant sound in the mix, the MP3 algorithms do a pretty good job of representing fundamentals and principal harmonics. Where the mix is much more complex, however, the picture is not so clear, and the representation is seemingly not so faithful to the original waveform.
But that, in a nutshell, is precisely how ‘lossy’ compression works after all – it does not encode what the ear and brain will supposedly, according to the theory, not ‘hear’.
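As a purely illustrative toy, the idea can be sketched as a rule that drops any component sitting close in frequency to a much louder one. To be clear, this is not the psycho-acoustic model used by LAME or defined for MP3; the one-octave neighbourhood, the 20 dB margin and every frequency and level below are made-up assumptions, chosen only to show the principle.

```python
import math


def toy_masked(components, max_octaves=1.0, margin_db=20.0):
    """Return the (freq_hz, level_db) components a crude masking rule drops.

    A component is dropped if any other component within max_octaves of it
    is louder by more than margin_db. Both thresholds are arbitrary.
    """
    dropped = []
    for freq, level in components:
        for other_freq, other_level in components:
            close = abs(math.log2(freq / other_freq)) <= max_octaves
            if close and other_level - level > margin_db:
                dropped.append((freq, level))
                break
    return dropped


# Hypothetical bass note: fundamental plus two quieter harmonics.
bass = [(98.0, -22.0), (294.0, -40.0), (980.0, -60.0)]

# Hypothetical louder wind notes sitting near the bass harmonics.
winds = [(330.0, -10.0), (880.0, -12.0)]

print("solo bass, dropped:", toy_masked(bass))
print("full mix, dropped: ", toy_masked(bass + winds))
```

With these invented numbers nothing is dropped when the bass plays alone, while in the mix both bass harmonics are dropped and the fundamental survives, which is the same pattern as in the plots: fine when solo-ed, thinner in a busy passage.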
